Human Struggle In A Time Of AI Hype

One of the selling points of AI tools and agents is that they save or free up your time, so you don't have to board the strugglebus. But is the ride truly not worth it?

It had been an hour, and I was well and truly stumped by the latest Python code assignment I'd been given for 100 Days of Code from Dr. Angela Yu. Gobsmacked. Cooked, perhaps, as the kids say these days.

Like all the other days in this course, the assignment challenged me to make something substantive and real - in this case, a game like Frogger, the classic arcade amphibian car-crossing game. But unlike with all the others to this point, I couldn't just breeze through a solution using the dusty foundation left in my brain from the programming classes I once took as a Computer Science minor. I was well and truly puzzled.

My cars were more like monster trucks, generated way too big. Not only that, when the difficulty was supposed to increase, they were created moving at the speed of light, as if a bunch of Bigfoots and Grave Diggers had been given the warp nacelles from the Enterprise. Not that it mattered to my turtle, who I discovered was functionally immortal: getting splatted by one of my maximum warp-capable trucks never triggered a game over condition.

Eventually, after trying, failing, walking away to do the dishes, and coming back, I figured it out. I was using an attribute to set the length and height of my cars the wrong way. I was incrementing the movement speed of my cars straight to the fastest speed instead of using some randomness to generate them. And I'd foolishly set the collision for my turtle, then wiped it out immediately by running another function.
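For the curious, here is a minimal sketch of what those three fixes could look like. It is not the actual project code, which isn't reproduced here: every name and number below (`Car`, `speed_for_level`, `spawn_car`, `step`, the constants) is a hypothetical stand-in, and it uses plain Python instead of the `turtle` module so it runs without opening a window.

```python
import math
import random
from dataclasses import dataclass

# Hypothetical values for illustration only.
STARTING_SPEED = 5
SPEED_INCREMENT = 2
MAX_SPEED = 25
HIT_DISTANCE = 20


@dataclass
class Car:
    x: float
    y: float
    speed: float
    # Fix 1: keep the two dimensions straight. In turtle graphics this maps to
    # shapesize(stretch_wid=1, stretch_len=2): for a car heading left or right,
    # stretch_wid is its height and stretch_len is its length.
    height: float = 1.0
    length: float = 2.0


def speed_for_level(level, rng=random):
    """Fix 2: grow speed a little per level, with some randomness, up to a cap."""
    base = STARTING_SPEED + SPEED_INCREMENT * (level - 1)
    return min(MAX_SPEED, base + rng.randint(0, SPEED_INCREMENT))


def spawn_car(level, rng=random):
    """Create a car at the right edge in a random lane."""
    lane_y = rng.choice(range(-240, 241, 40))
    return Car(x=300, y=lane_y, speed=speed_for_level(level, rng))


def is_hit(player_xy, cars):
    """True if any car is within HIT_DISTANCE of the player."""
    px, py = player_xy
    return any(math.hypot(car.x - px, car.y - py) < HIT_DISTANCE for car in cars)


def step(player_xy, cars):
    """Advance one frame and report whether the game is over."""
    for car in cars:
        car.x -= car.speed
    # Fix 3: check the collision after everything has moved and return the
    # result directly, so no later function call can overwrite it.
    return is_hit(player_xy, cars)
```

In a game loop, something like `game_over = step(player_position, cars)` would run once per frame, with `spawn_car(level)` called whenever a new car is needed.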

This is probably where all those ads come in, the ones where a sufficiently nerdy-looking programmer or a mildly attractive influencer tells the camera that I could have saved a ton of time just by feeding it all into AI.

Code your solution in hours instead of days, they say. Find the time to do the other things you want to do, they gush as they pantomime a casual and fun and not-at-all coding activity they get to do because they plopped their thoughts into ChatGPT or Claude. Cue nodding and smiling at the camera in what I've honestly all but dubbed the 2026 version of Kid Giving Thumbs Up. You get the idea.

All of these things have generally the same theme - skip the struggle. Stop agonizing over the code. Don't waste your time with the Sisyphean effort of bugfixing, failing to bugfix, breaking something else while bugfixing, and finally getting something workable. The idea is that the struggle is wrong - nay, wasteful, in the context of limited human hours in a limited human lifespan.

While I think the idea has merit in some contexts, I also think it is, possibly, dangerous.

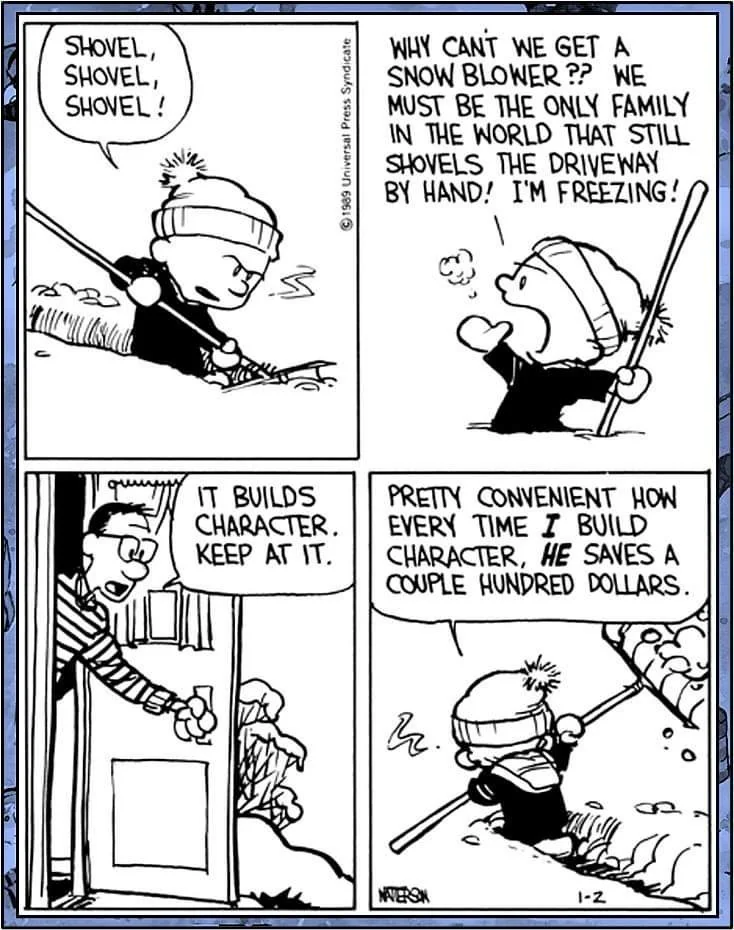

I love Calvin and Hobbes, partly because Calvin is clearly ahead of his time in many ways, even though he's still obviously a kid. One theme that comes up is the idea from Calvin's dad that something that seems difficult and a pain is character-building. It's used frequently as a joke, and it's not like Calvin doesn't have a legitimate point. I can't say that I didn't look jealously across the street at a snowblower running while I was shoveling the sidewalk as a kid.

Yet now as an adult, I see Calvin's dad's point. It isn't about the chore, but about what the chore represents: being responsible enough to make sure that you're not wading through Hoth when it snows, knowing that it's good to clear a path for you, your family and your neighbors, learning how to shovel quickly enough to minimize time outside, and all that adult-y stuff. You could buy the snowblower and learn all that too, to a certain, smaller extent, but you'd also be pretty dependent on the snowblower to do the work.

I'm sure some of you saw what I just did there, but that's not the whole picture.

I recently stumbled upon a really excellent, balanced take on the impact of AI tools written by Dr. Minas Karamanis, an astrophysicist who has observed first-hand how they have affected his colleagues and, more importantly, up-and-coming students. His point is that, at its base, the use and proliferation of AI tools (ethical, legal, and moral debates aside) is mostly fine, and even useful at times, but what it is teaching (or in this case not teaching) students to do is potentially a problem, even if the results are functionally the same:

> When I see junior PhD students entering the field now, I see something different. I see students who reach for the agent before they reach for the textbook. Who ask Claude to explain a paper instead of reading it. Who ask Claude to implement a mathematical model in Python instead of trying, failing, staring at the error message, failing again, and eventually understanding not just the model but the dozen adjacent things they had to learn in order to get it working. The failures are the curriculum. The error messages are the syllabus. Every hour you spend confused is an hour you spend building the infrastructure inside your own head that will eventually let you do original work. There is no shortcut through that process that doesn't leave you diminished on the other side.

If you've read to this point, you'd think I was staunchly anti-AI, but I'm not. If you're super anti-AI and you are about to close this article in disgust, that's totally fine and fair - but at minimum, consider my thoughts first. There is value to the tools in the sense that, stripped of all the keynotes and the ads and the AI evangelism, they're no different from other "hyped" technologies of the past. The hype around the tools is obscene, but I feel that even after the bubble bursts (and I truly feel like it will, because nobody likes to pay, say, hundreds or thousands of dollars for simple PC upgrades because the AI companies Hoovered up the parts like Kirby), things will probably settle into more realistic goals and outcomes.

To be clear, I use AI tools in very specific contexts:

- Never to generate content or media, but sometimes to double-check what I make for dumb grammar and logistical mistakes

- To search efficiently, asking for sources that I always vet (because web search, even setting aside AI-assisted answers, has gone down the tubes)

- To test them for practical use (because, as in many places, everyone is curious about common-sense applications, and it's my job in part to know what's there)

Don't get me wrong - the aforementioned ethical, legal, and moral issues need to be addressed: the lack of compensation towards creatives, the unsustainable model of expansion, the constant overreach affecting things like the environment or everyday items, and most of all, the effect it has had as it's attempted to replace (rather than enhance) significant parts of human labor.

If you use AI a ton, espouse its benefits, work with it because it honestly helps you and there's value, that's ok. Likewise, if you will never, ever touch an AI tool in your life, and you find the whole idea to be abhorrent, then that's ok too. There are lots of experiences, and they're all a part of the debate over how present these tools have become lately. Individually and collectively, folks will have to make decisions about where (or if) a red line exists beyond which they find the current trajectory unacceptable. Speaking just for me, mine happens to be giving up ownership of my agency to learn, even if that learning comes with a lot of confusion, mistakes, and repetition.

As a result, I have ended up with a nuanced viewpoint about this stuff, and I think Dr. Karamanis does, too. Like him, I think the extremes ignore the middle, where results ultimately happen. But what's the result? Here's what Dr. Karamanis has to say at the end of his article:

> Five years from now, Alice will be writing her own grant proposals, choosing her own problems, supervising her own students. She'll know what questions to ask because she spent a year learning the hard way what happens when you ask the wrong ones. She'll be able to sit with a new dataset and feel, in her gut, when something is off, because she's developed the intuition that only comes from doing the work yourself, from the tedious hours of debugging, from the afternoons wasted chasing sign errors, from the slow accumulation of tacit knowledge that no summary can transmit.
>
> Bob will be fine. He'll have a good CV. He'll probably have a job. He'll use whatever the 2031 version of Claude is, and he'll produce results, and those results will look like science.
>
> I'm not worried about the machines. The machines are fine. I'm worried about us.

What Dr. Karamanis puts forth, and what I'd agree with him on, is that the true peril at the heart of all this is what happens to the humans, not the machines.

I'm less worried about us older folks, who, as with anything else novel or hyped over the years, have been exposed to, used (or not used), and discarded lots of tech. If anything, struggling with a Discman that kept skipping my CDs despite "anti-skip" tech, spending two days figuring out why my first WordPress site wouldn't stop accepting spambot comments, and wondering why my BlackBerry kept syncing copies of my mail over and over again have prepared me for any struggle bus ride (or the lack thereof) involving AI tool use.

But it's younger people that I worry for, the ones who didn't have the luxury (or pain, you pick) of growing up without this kind of tech in their hands.

People who are tempted, as Dr. Karamanis writes, to not "build the infrastructure" in their brains that can only come from making mistakes, failing, and eventually learning how not to repeat mistakes.

People who reach for an agent to summarize something for them, to solve something for them, rather than absorbing, reading, failing to understand, then eventually having an insight that only that process can give them.

People who might have trouble coping with, or actively work to avoid, struggle when they inevitably face it in life.

And that's weird, because we're constantly fed the idea that triumphing over adversity, or testing our resilience through struggle, makes us better as humans. We see it in movies, in shows, in stories of regular humans, and in life that we experience every day.

Christopher Nolan spent three movies making Batman's suffering into meaningful pathos anchored by some of the most iconic lines delivered by Michael Caine's Alfred.

If you read me for games, Expedition 33's entire concept revolved around the good and bad ways we deal with adversity because of grief, with the added bonus of a truly banger soundtrack.

And speaking of music, if you're one of my K-Pop subscribers, EJAE from KPop Demon Hunters recently said of her journey of not getting to be an idol and climbing back up that "rejection is redirection".

If we always skip the struggle when it comes to how we learn, just because we want to save a few hours or days by letting a machine do it for us, then we don't really learn much at all, except that we need the machine. And when the machine breaks, or it's not available, or it messes up what it's supposed to fix, some people who didn't learn through screwing up a few times are probably up a creek with no paddle and arguably, no boat.

It took maybe another two hours for me to fix my imitation Frogger program. When I found out what I'd done wrong, corrected it, and watched my program execute exactly the way it was supposed to, I felt a sense of satisfaction over the whole thing - not just because it was running, but because I had learned something from messing up that helped me get it to run.

Could I have gotten an AI agent to write it for me, and maybe even explain it? Sure.

Could I maybe have understood it to a certain degree and moved on to future lessons faster? Probably.

But could I have built the foundation in my brain, one that only comes from trying, failing, and trying again, that could help me later? That's what's irreplaceable - at least until we start knowing Kung Fu at the press of a button, anyway - and it's something that served me five lessons later and beyond. I dodged another hours-long session on a similar problem for a project because I knew how I had screwed up the first time, and applied what I learned to make things better. That's irrevocably mine, and not the AI tool's, and I think I'm better off for it and all the iterations it took to get there.

We should embrace those iterations, and the inevitable struggles that eventually lead to understanding, and with it, that unique power the human brain has to combine knowledge and the emotional journey of attaining that knowledge into something great. That's not me waxing poetic - it's what's real, and one need only look to history to see many, many examples of it in action.

To abdicate that because we are afraid to fail is to risk never being equipped to deal with failing or struggling at all. And that's a more perilous outcome.